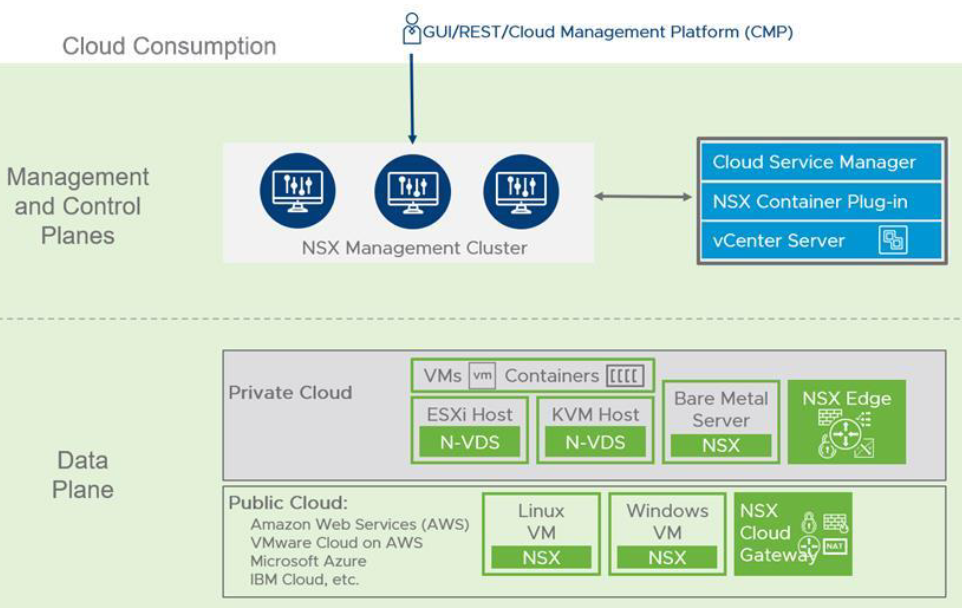

As mentioned in Introduction to VMware NSX, NSX-T Data Center is built on three integrated layers of components: the management plane, the control plane, and the data plane. Separating these key roles lets the platform scale without impacting workloads.

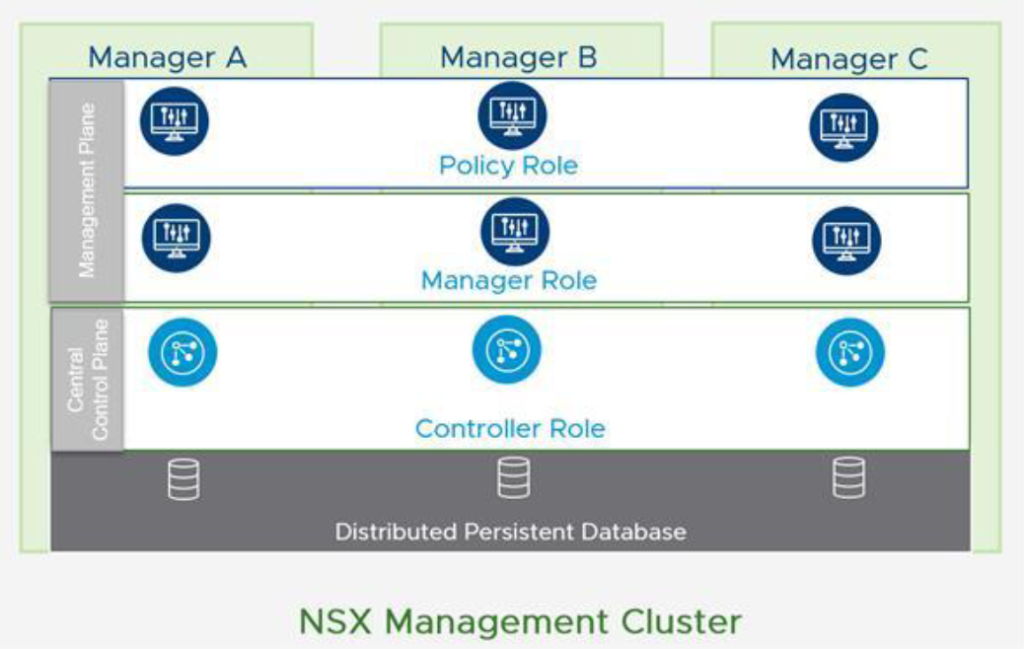

The NSX-T management cluster is built from three NSX Manager nodes, and the management plane and control plane are converged on each node. NSX Manager provides a web GUI and a REST API for management purposes. This is one of the architectural differences compared to NSX-V, which had to be integrated into the vSphere Client and vCenter Server. NSX Manager can also be consumed by a Cloud Management Platform (CMP) such as vRealize Automation to integrate SDN into cloud automation platforms. In addition, NSX Manager can connect to vSphere infrastructure by registering vCenter Server as a Compute Manager.
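As a rough illustration of consuming the REST API, the sketch below builds an authenticated request for a manager node using only the Python standard library. The host name and credentials are placeholders, and the `/api/v1/cluster/status` path should be checked against the API guide for your NSX-T version. The helper names `build_request` and `fetch_json` are made up for this example.

```python
import base64
import json
import ssl
import urllib.request


def build_request(manager_host, username, password, path="/api/v1/cluster/status"):
    """Build a GET request with HTTP basic auth for one NSX Manager node.

    manager_host, username and password are placeholders supplied by the caller.
    """
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    return urllib.request.Request(
        url=f"https://{manager_host}{path}",
        headers={
            "Authorization": f"Basic {token}",
            "Accept": "application/json",
        },
        method="GET",
    )


def fetch_json(request, verify_tls=True):
    """Send the request and decode the JSON response body."""
    context = ssl.create_default_context()
    if not verify_tls:
        # Only for lab setups with self-signed certificates.
        context.check_hostname = False
        context.verify_mode = ssl.CERT_NONE
    with urllib.request.urlopen(request, context=context, timeout=10) as response:
        return json.load(response)


if __name__ == "__main__":
    # Hypothetical host and credentials; nothing is sent until fetch_json is called.
    req = build_request("nsx-mgr-01.example.local", "admin", "changeme")
    print(req.get_method(), req.full_url)
```

Because any manager node can answer API requests, the same request could be pointed at any node of the cluster or at a virtual IP in front of it.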

Each NSX Manager virtual appliance holds the Manager role, the Controller role, and the Policy role. Requests from users and integrated systems such as vRA, whether they arrive through the web GUI or the API, can be handled by any manager node in the cluster. This makes it possible to share the load across all nodes in the cluster. The Policy role provides a centralized location for the networking and security configuration that appears in the Simplified UI of the NSX Manager web GUI. This centralized management covers all workloads, including VMs, containers, and bare-metal servers. The Manager role receives the configuration from the Policy role, stores it in a distributed persistent database (CorfuDB), and pushes it to the Controller role, which is the part of the control plane that resides on the NSX Managers (the Central Control Plane). As shown below, CorfuDB runs across all manager nodes and provides the same configuration view on each node.

In NSX-T Data Center, the control plane is divided into the Central Control Plane (CCP) and the Local Control Plane (LCP). The CCP runs on the NSX Managers, and the LCP runs on transport nodes such as ESXi hosts, KVM hosts, and Edge nodes. The CCP computes the runtime state of the environment based on the configuration from the management plane. It then communicates that state to the data plane by pushing it to the LCP on each transport node. Splitting the control plane into CCP and LCP enables NSX-T Data Center to scale to various types of endpoints, for example hypervisors, containers, and public cloud platforms.
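The CCP/LCP split can be pictured with a small toy model. This is not NSX-T code. It is a simplified sketch that assumes the desired configuration is just a mapping of segments to attached workloads, and that the "runtime state" is the list of transport nodes participating in each segment. All class and function names are invented for the illustration.

```python
from dataclasses import dataclass, field


@dataclass
class LocalControlPlane:
    """Toy stand-in for the LCP agent on one transport node."""
    node_name: str
    runtime_state: dict = field(default_factory=dict)

    def receive(self, state):
        # Store a copy of the state that is relevant to this node.
        self.runtime_state = dict(state)


def compute_runtime_state(desired_config):
    """Toy CCP step: for each segment, list the transport nodes hosting a workload.

    desired_config maps a segment name to (workload, transport_node) pairs.
    """
    return {
        segment: sorted({node for _workload, node in attachments})
        for segment, attachments in desired_config.items()
    }


def push_to_nodes(state, lcps):
    """Toy CCP step: send each LCP only the segments its node participates in."""
    for lcp in lcps:
        lcp.receive(
            {seg: nodes for seg, nodes in state.items() if lcp.node_name in nodes}
        )


if __name__ == "__main__":
    # Hypothetical desired configuration coming from the management plane.
    config = {
        "web-segment": [("web-vm-1", "esxi-01"), ("web-vm-2", "kvm-01")],
        "db-segment": [("db-vm-1", "esxi-01")],
    }
    lcps = [LocalControlPlane("esxi-01"), LocalControlPlane("kvm-01")]
    push_to_nodes(compute_runtime_state(config), lcps)
    for lcp in lcps:
        print(lcp.node_name, lcp.runtime_state)
```

The point of the sketch is only the direction of flow: configuration is computed centrally, and each transport node receives the part of the runtime state it needs.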

The data plane forwards network traffic based on the configuration advertised by the control plane and also reports topology information back to the control plane. NSX-T Data Center supports ESXi and KVM hypervisor transport nodes, Linux bare-metal servers, and VM-based or bare-metal Edge nodes. Edge nodes provide the compute resources for stateful services such as NAT and VPN on logical routers.

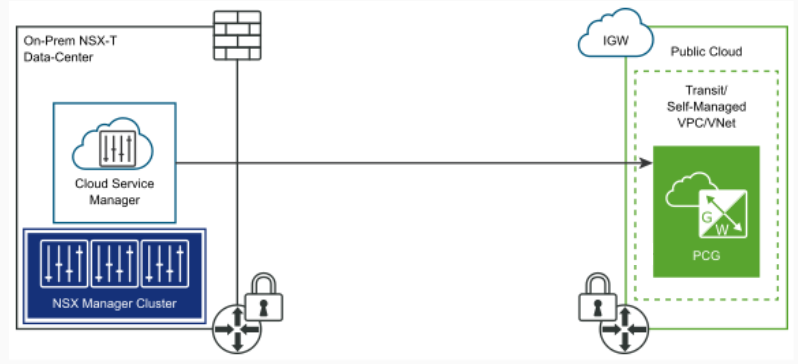

To connect the on-prem virtual networking and security platform to public cloud services such as AWS or Azure, Cloud Service Manager (CSM) should be used. This appliance is installed on-prem and integrates the NSX Manager cluster with the cloud providers' network engines. NSX-T Data Center can also serve container platforms through the NSX-T Container Plugin (NCP). This plugin provides the integration between NSX-T Data Center and container platforms such as Kubernetes, OpenShift, and Pivotal Cloud Foundry (PCF).

In the NSX-T Deep Dive series of blog posts, we are going to walk through a step-by-step implementation of NSX-T Data Center.
