SSH File Transfer Protocol (SFTP), often called Secure File Transfer Protocol, is a secure method for transferring files over a network. Unlike traditional FTP, which sends credentials and data in plain text, SFTP runs over the Secure Shell (SSH) protocol, which encrypts both the authentication information and the data being transferred. This encryption protects sensitive data in transit, making SFTP a preferred choice wherever files have to cross a network securely.

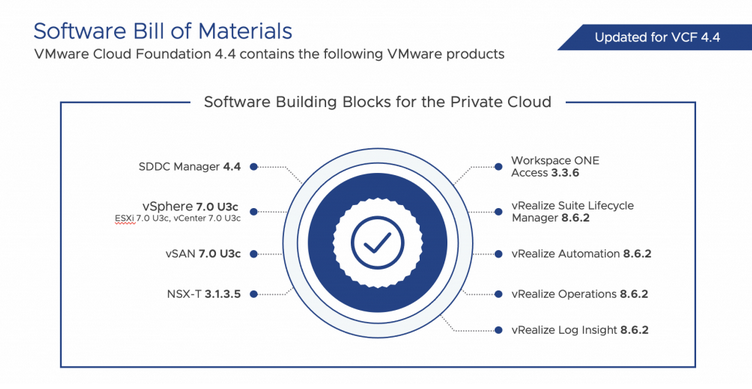

An SFTP server is important in a VMware environment for secure and reliable file-based backups. Components such as vCenter Server, NSX Manager, and SDDC Manager use SFTP as the target for their file-based backups. SFTP also allows centralized backup management and remote storage, which strengthens disaster recovery by keeping backup data off-site and enabling quick restoration.

In this blog post, I’ll explain step by step how to set up an SFTP service on an Ubuntu server.
