Every vSAN update has brought innovation, easier management, and a growing set of features. VMware has announced vSAN 8U2, which introduces a new topology, new features, and enhancements.

In this blog post, I will highlight the most important feature updates for the vSAN Original Storage Architecture (OSA) and the vSAN Express Storage Architecture (ESA), which fall into three categories:

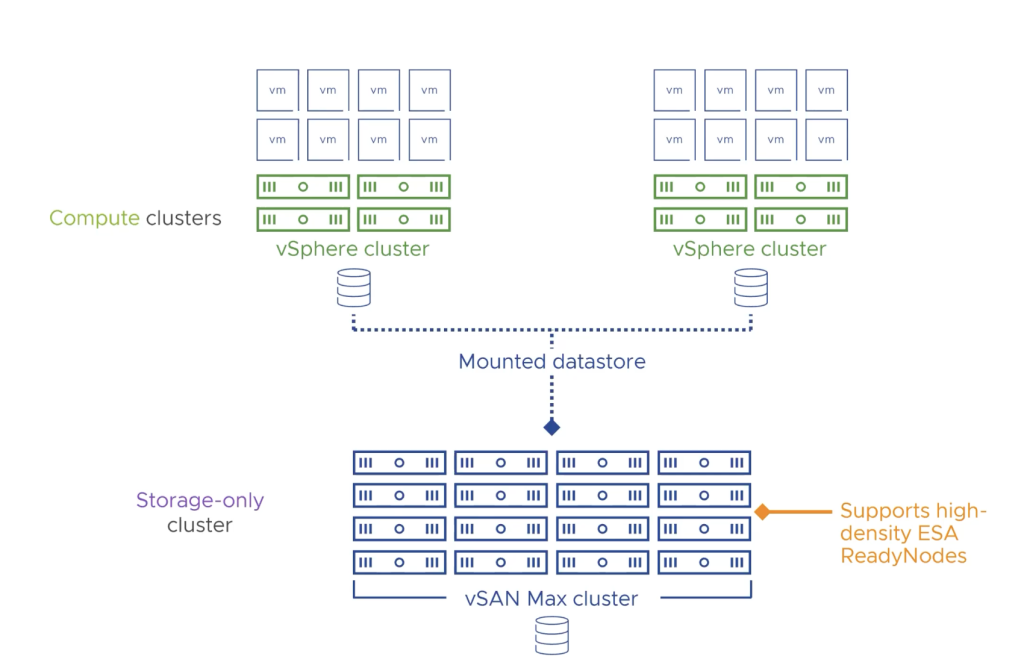

- Flexible Topologies – vSAN Max Storage Cluster

- Core Platform Advances – Support of vSAN File Services in ESA

- Enhanced Management – ESA Prescriptive Disk Claim, Auto Policy Remediation

Let's start with vSAN Max, vSAN's new disaggregated HCI offering, which provides high performance, efficiency, and resiliency. It is built on vSAN ESA and scales incrementally: instead of adding compute and storage together, you can add storage alone and provide multiple petabytes of capacity to vSphere clusters. vSAN Max supports up to 360 TB of capacity per host, so a cluster at the maximum of 24 nodes can provide about 8.6 petabytes of storage (24 × 360 TB = 8,640 TB) for vSphere clusters.
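To make the capacity arithmetic concrete, here is a minimal Python sketch that multiplies the two limits quoted above (360 TB per host, 24 hosts per cluster). The function names are my own, and I am assuming decimal units (1 PB = 1,000 TB) and raw capacity only; usable capacity after storage policy overhead is lower and is not modeled here.

```python
# Limits quoted in this post; treated here as raw capacity figures.
MAX_TB_PER_HOST = 360
MAX_HOSTS_PER_CLUSTER = 24


def raw_capacity_tb(hosts: int, tb_per_host: int = MAX_TB_PER_HOST) -> int:
    """Return raw cluster capacity in TB for the given host count."""
    if not 1 <= hosts <= MAX_HOSTS_PER_CLUSTER:
        raise ValueError(f"hosts must be between 1 and {MAX_HOSTS_PER_CLUSTER}")
    if not 0 < tb_per_host <= MAX_TB_PER_HOST:
        raise ValueError(f"tb_per_host must be between 1 and {MAX_TB_PER_HOST}")
    return hosts * tb_per_host


def tb_to_pb(tb: float) -> float:
    """Convert TB to PB using decimal units (assumption: 1 PB = 1,000 TB)."""
    return tb / 1000


if __name__ == "__main__":
    # Show how capacity grows as storage hosts are added incrementally.
    for hosts in (6, 12, 24):
        tb = raw_capacity_tb(hosts)
        print(f"{hosts:>2} hosts: {tb:>5} TB raw ({tb_to_pb(tb):.2f} PB)")
```

At the full 24 hosts this works out to 8,640 TB, or roughly 8.6 PB of raw capacity.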
